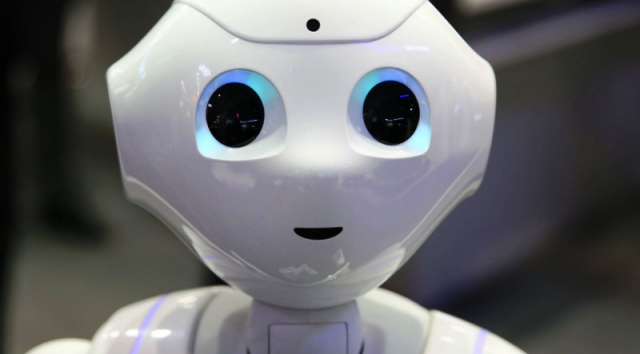

SAN FRANCISCO, CALIFORNIA | Eight computer science professors at Oregon State University (OSU) have been tasked with making systems based on artificial intelligence (AI), such as autonomous vehicles and robots, more trustworthy.

Recent advances in autonomous systems that can perceive, learn, decide and act on their own stem from the success of the deep neural networks branch of AI, with deep-learning software mimicking the activity in layers of neurons in the neocortex, the part of the brain where thinking occurs.

The problem, however, is that the neural networks function as a black box. Instead of humans explicitly coding system behavior using traditional programming, in deep learning the computer program learns on its own from many examples.

Potential dangers arise from depending on a system that not even the system developers fully understand.

With a grant of 6.5 million U.S. dollars over the next four years from the Defense Advanced Research Projects Agency (DARPA), under its Explainable Artificial Intelligence program, the OSU researchers will develop a paradigm to look inside that black box by getting the program to explain to humans how its decisions were reached, according to a news release from OSU this week.

“Ultimately, we want these explanations to be very natural – translating these deep network decisions into sentences and visualizations,” Alan Fern, principal investigator for the grant and associate director of the OSU College of Engineering’s recently established Collaborative Robotics and Intelligent Systems Institute, was quoted as saying in a news release.

“Nobody is going to use these emerging technologies for critical applications until we are able to build some level of trust, and having an explanation capability is one important way of building trust.”

Building a system that communicates well with humans requires expertise in a number of research fields.

In addition to having researchers in artificial intelligence and machine learning, the OSU team includes experts in computer vision, human-computer interaction, natural language processing, and programming languages.

And to begin developing the system, the team will use real-time strategy games, like StarCraft, a staple of competitive electronic gaming, to train artificial-intelligence “players” that will explain their decisions to humans.

Later stages of the project will move on to applications provided by DARPA that may include robotics and unmanned aerial vehicles. Fern noted that the research is crucial to the advancement of autonomous and semi-autonomous intelligent systems.